AI affects IT in ways that almost no one sees and that certainly nobody demos in a board meeting.

For years, enterprise IT has operated under a quiet understanding: networks don’t have to be perfect. They have to be good enough, often enough, that nobody upstairs notices. Redundancy was a best practice. Diversity was a procurement checkbox. Downtime was regrettable but explainable.

The truth is, AI puts pressure on the parts of IT you never get credit for running well — and never hear the end of when they fail. As far as most of the business is concerned, your infrastructure only matters when something goes wrong. That anonymity used to be fine. AI is changing the rules.

AI doesn’t run on “good enough most of the time.” It moves reliability from a background expectation to a foreground dependency, and the organizations that haven’t engineered for it are about to find out the hard way.

Most enterprise networks were not designed for what AI demands of them. They were designed for what the business demanded five years ago, which was mostly “don’t go down” and “stay in budget.” That was achievable. Latency tolerance was generous. Redundancy was a contingency. Scalability was a future consideration. Vendor accountability was a contract term, not a daily operating reality. AI changes the math. Not eventually. Now.

How AI Increases Enterprise Network Demand and Risk

AI increases demand: more traffic, more east-west movement of data between data centers, applications, and users, and more reliance on cloud and interconnect. At the same time, it raises the consequences of failure. When a core workflow depends on AI-driven insights, downtime hits harder. Slow applications cost more. Reliability gaps that were once tolerable become material operational risk.

Most IT leaders already know the answer in principle: engineer reliability early, validate diversity, factor in scalability, close vendor blind spots, and make accountability clean when something breaks. The challenge is execution.

AI Workloads at the Edge: What Enterprise IT Actually Needs

A lot of conversations about AI infrastructure focus on hyperscale campuses and training clusters. That’s not where most enterprise IT leaders feel the pressure. The pressure shows up closer to the business. A hospital needs inference at the edge to support faster clinical decisions. A manufacturer needs computer vision integrated into production lines. A campus needs real-time analytics to support safety operations. A retailer needs demand forecasting that updates continuously rather than overnight.

Every one of those outcomes has the same underlying requirement: the network has to be predictable and scalable. Every day. During peak demand. During maintenance windows. During incidents. If your connectivity was engineered for “good enough,” AI will expose it.

Enterprise Network Reliability: Questions to Ask Before AI Exposes the Gaps

When planning for higher-stakes workloads, enterprise IT leaders tend to ask the same questions. They’re worth taking a hard look at before you need the answers.

- Do we have true physical diversity, or just logical diversity? If two circuits share a conduit, a pole line, a rail crossing, or a single carrier handoff, you don’t have real resiliency. You have a shared failure point with a different label.

- Can we prove the path, end to end? A contract promise is not the same as a validated route. You want actual visibility into how diversity is achieved so you can align it to your risk model – not take someone’s word for it.

- Who owns the outcome during an incident? Layered delivery models create layered excuses. One provider blames the other. Meanwhile your team becomes the switchboard. That’s a governance problem, not just a network problem.

- How fast can we make changes without breaking production? AI growth means constant capacity and architecture upgrades. That demands strong change management, clean handoffs, and a partner that can execute inside your governance model rather than around it.

- Can our network scale with our AI roadmap, or are we building toward a bottleneck? Most enterprise networks were sized for current workloads, not the AI ambitions being planned two to five years out. If your infrastructure isn’t growing in step with your AI initiatives – in bandwidth, in redundancy, and in architecture – the network becomes the ceiling, not the foundation.
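The scaling question above can be turned into a rough planning number. The following is a minimal sketch, assuming peak traffic grows at a steady compound monthly rate and that a circuit should be upgraded before peak load reaches a chosen fraction of its capacity. The function name, the 70% ceiling, and the example figures are illustrative assumptions, not DQE guidance or measured data.

```python
import math


def months_until_saturation(current_gbps: float, capacity_gbps: float,
                            monthly_growth: float, ceiling: float = 0.7) -> float:
    """Months until peak traffic reaches ceiling * capacity under compound growth.

    monthly_growth is a fraction (0.05 means 5% per month). The ceiling is an
    assumed planning threshold, not an industry standard.
    """
    if current_gbps <= 0 or capacity_gbps <= 0:
        raise ValueError("traffic and capacity must be positive")
    threshold = capacity_gbps * ceiling
    if current_gbps >= threshold:
        return 0.0  # already at or past the planning threshold
    if monthly_growth <= 0:
        return math.inf  # flat or shrinking traffic never reaches the threshold
    # Solve current * (1 + g)^m = threshold for m.
    return math.log(threshold / current_gbps) / math.log(1 + monthly_growth)


# Hypothetical site: 2 Gbps peak today on a 10 Gbps circuit, growing 5% a month.
runway = months_until_saturation(2.0, 10.0, 0.05)
print(f"Upgrade runway: {runway:.1f} months")
```

With these made-up inputs the runway comes out between 25 and 26 months, which is inside the two-to-five-year window most AI roadmaps cover. Running the same calculation per site shows where the network becomes the ceiling first.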

Network Architecture Decisions That Determine Enterprise Reliability

“Reliable” is not a box you check on a provider’s product sheet. It’s a set of day-one decisions: route planning, diverse entry points, scalability, smart handoffs, clean documentation.

It’s also a people decision. When you’re on an outage bridge at 2am, you want direct access to engineers who understand the local network, the local constraints, and the fastest path to restore service. Not a portal ticket. Not a support queue. DQE’s dedicated fiber network and 24/7/365 local NOC are built for exactly that accountability model – a partner who shows up and stays accountable until it’s resolved.

How to Strengthen Your Enterprise Network Before AI Workloads Demand It

Many IT leaders treat network resiliency for AI as a future problem. It isn’t. AI is already shifting what your users and leadership expect from application performance. More uptime. Faster answers. Less tolerance for “we’re waiting on the carrier.”

You can get ahead of it without a large, disruptive initiative. Start here:

- Validate diversity at your most critical sites. Pick your top 10 locations. Confirm whether circuits share physical risk — shared laterals, shared handoffs, or shared interconnect paths are common and often invisible until something fails.

- Align network architecture to business risk. Not every site needs the same build. Some need true diverse entrances. Some need higher capacity. Some need a simpler design with a clean upgrade path. Match spend to actual risk, not to habit or historical contracts.

- Close the accountability gap. Ask one question: if a link goes down at 2am, who owns the fix end to end? If the answer involves multiple providers pointing at each other, you have a structural problem worth solving before a high-stakes incident forces the issue.
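The diversity check in the first step can be sketched in a few lines once you have route documentation from your providers. This is a minimal illustration, assuming each circuit can be described as a set of physical elements it traverses. The circuit names and element labels below are hypothetical; real input would come from validated route maps or as-built records.

```python
from itertools import combinations

# Hypothetical route data: circuit name -> physical elements on its path
# (conduits, pole lines, carrier handoffs).
circuits = {
    "primary": {"conduit-A", "pole-line-7", "handoff-east"},
    "backup": {"conduit-B", "pole-line-7", "handoff-west"},
    "tertiary": {"conduit-C", "handoff-north"},
}


def shared_risk(circuits):
    """Return {(circuit_a, circuit_b): shared elements} for every overlapping pair."""
    findings = {}
    for a, b in combinations(sorted(circuits), 2):
        overlap = circuits[a] & circuits[b]
        if overlap:
            findings[(a, b)] = overlap
    return findings


for pair, overlap in shared_risk(circuits).items():
    print(pair, "share", sorted(overlap))
```

In this made-up example the primary and backup circuits look diverse on paper but ride the same pole line, which is exactly the kind of shared failure point that stays invisible until something fails.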

Why Enterprise Network Reliability Is Now a Business Performance Metric

AI makes network reliability visible in ways it wasn’t before. It turns infrastructure performance into business performance. It turns diversity from a best practice into something you have to be able to prove. It makes scalability non-negotiable. It turns provider responsiveness from a soft preference into a real operating metric.

The best time to engineer resiliency is before you need it.

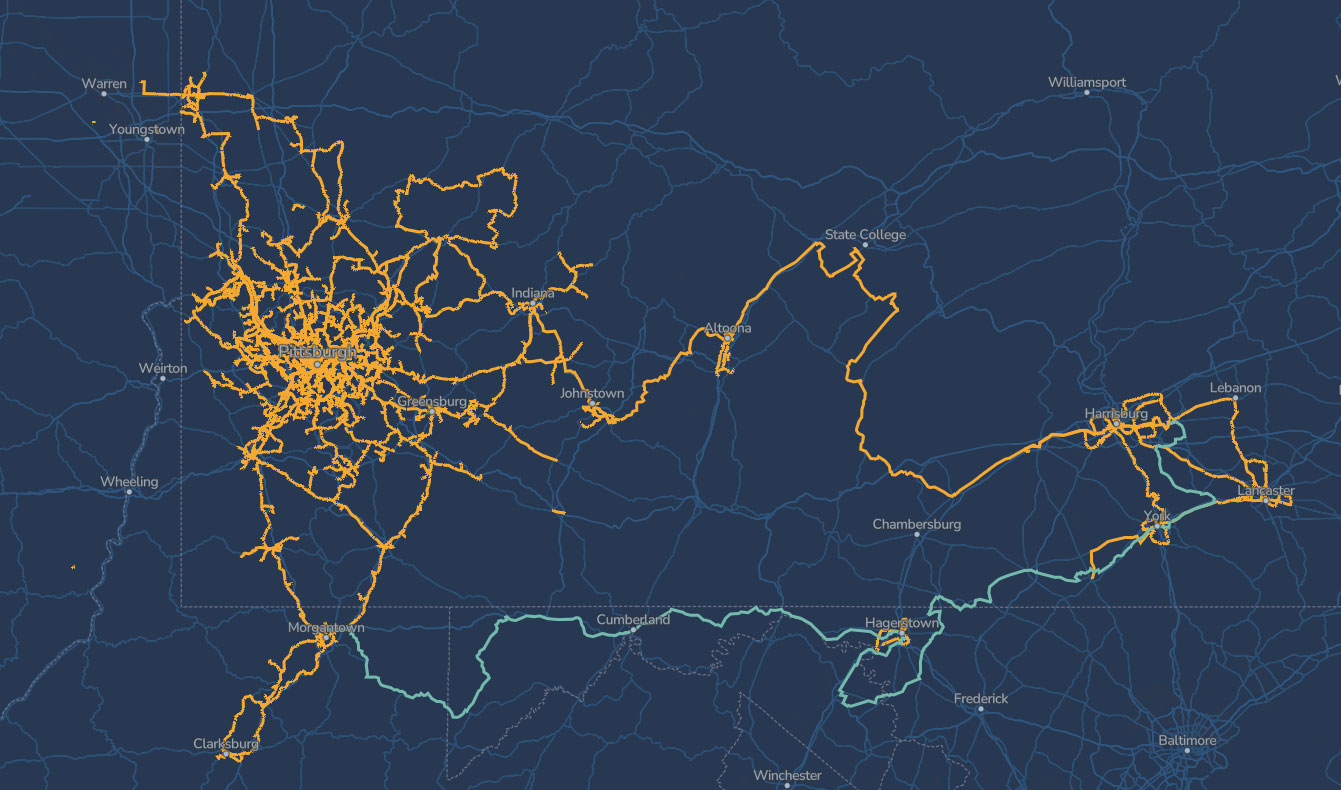

About DQE Communications

DQE Communications is a business-only fiber network provider serving enterprise organizations across Pennsylvania, West Virginia, and Ohio. DQE delivers dedicated fiber internet, Metro Ethernet, Wavelength, and Dark Fiber services purpose-built for the reliability and performance AI-driven workloads demand. DQE’s Pennsylvania-based NOC operates 24/7/365, and every account has a dedicated support team — not a portal, not a queue.

Want to validate your network’s AI readiness before a high-stakes incident forces the issue?

Talk to a DQE engineer. We’ll walk through your current architecture, identify shared-risk points, and show you where a dedicated fiber solution closes the gap.

FAQ: Enterprise Network Reliability for AI

Enterprise AI workloads require network infrastructure built for sustained, high-bandwidth throughput with low, predictable latency and physical path diversity. Unlike traditional enterprise applications that tolerate intermittent slowdowns, AI-driven workflows such as real-time inference, continuous analytics, and edge computing depend on consistent, predictable connectivity every hour of the day. The baseline requirements are dedicated fiber internet with symmetrical upload and download capacity, physically diverse routing, and a single accountable provider for end-to-end performance. DQE delivers dedicated fiber infrastructure across Pennsylvania, West Virginia, and Ohio specifically engineered for the reliability AI workloads demand.

Physical network diversity means that two or more network paths travel through entirely separate infrastructure — different conduit, different poles, different rights-of-way, different geographic routes — so that a single failure cannot affect more than one path simultaneously. Logical diversity means that traffic is routed differently at the software or protocol level, but the underlying physical infrastructure may still share conduit, carrier handoffs, or common failure points. For enterprise AI workloads, logical diversity is not sufficient. If two circuits share a physical conduit or a single carrier handoff, a construction accident or equipment failure can take both down at once, regardless of how they are configured logically.
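A short calculation shows why a shared element undermines redundancy. The sketch below uses the standard availability formulas for parallel and series components and assumes that failures are independent, which is a simplification. The 99.9% figures are illustrative, not measured values for any provider.

```python
MINUTES_PER_YEAR = 365 * 24 * 60


def parallel_availability(path_availability: float, paths: int = 2) -> float:
    """Availability of N independent paths when any one surviving path is enough."""
    return 1 - (1 - path_availability) ** paths


def with_shared_element(path_availability: float, shared_availability: float,
                        paths: int = 2) -> float:
    """Same redundant paths, but all of them also cross one shared element in series."""
    return shared_availability * parallel_availability(path_availability, paths)


def downtime_minutes(availability: float) -> float:
    """Expected downtime per year implied by an availability figure."""
    return (1 - availability) * MINUTES_PER_YEAR


# Two circuits at an assumed 99.9% each, with and without a shared conduit
# that is itself assumed to be 99.9% available.
diverse = parallel_availability(0.999)
shared = with_shared_element(0.999, 0.999)
print(f"Physically diverse: {downtime_minutes(diverse):.1f} min/year")
print(f"Shared conduit:     {downtime_minutes(shared):.1f} min/year")
```

Under these assumptions the shared conduit caps the pair at roughly the availability of that one conduit, so the second circuit adds cost without adding much protection.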

AI raises the consequences of network unreliability by making infrastructure performance directly visible as business performance. Before AI, network downtime was an IT problem that leadership noticed occasionally. When core business workflows — clinical decision support, automated manufacturing, real-time demand forecasting — depend on AI-driven outputs, a network outage or degraded latency immediately affects business operations rather than staying in the background. AI also increases baseline demand: more east-west traffic between data centers, more reliance on cloud interconnect, and more continuous data movement. Networks engineered for prior-generation demand levels are likely to show strain as AI workloads scale.

Enterprise IT leaders should ask four questions before trusting a network provider with AI workloads. First, can you prove physical route diversity end-to-end, not just logical path separation? Second, who owns the outcome during an incident — is there a single accountable team or multiple providers who will point at each other? Third, what is your actual latency at the application layer under sustained load, not just peak performance in a test environment? Fourth, how quickly can architecture changes be executed when AI growth requires capacity or routing upgrades? Providers who cannot answer these questions with specifics rather than contract language are not ready to support AI-critical infrastructure.
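For the third question, a single average hides the slow requests that users actually notice. The following is a minimal sketch of how latency measurements collected under sustained load might be summarized by median and tail percentiles using Python's standard library. The sample values are invented for illustration, and how the samples are collected is left open.

```python
import statistics


def latency_summary(samples_ms):
    """Summarize latency samples (milliseconds) by median and tail percentiles."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples")
    # n=100 yields 99 cut points; index 94 is the 95th percentile, 98 the 99th.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {
        "p50": statistics.median(samples_ms),
        "p95": cuts[94],
        "p99": cuts[98],
        "max": max(samples_ms),
    }


# Hypothetical measurements: mostly 10 ms, with a few 200 ms stalls.
samples = [10] * 95 + [200] * 5
print(latency_summary(samples))
```

In this made-up data set the median looks healthy while the 99th percentile sits at the stall value, which is the gap between peak test results and sustained application-layer behavior that the question is meant to surface.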

An enterprise network is ready for AI workloads when it can demonstrate three things: true physical diversity at every critical site, a single accountable provider or clearly defined accountability for end-to-end performance, and documented latency that meets the requirements of the AI applications running on it. A practical starting point is to audit the ten most business-critical locations and confirm whether any circuits share physical infrastructure. Shared conduit, shared poles, and shared carrier handoffs are common and often undocumented. Shared physical risk at critical sites is one of the most common undetected reliability gaps in enterprise networks today.

Network downtime matters more with AI workloads because AI has moved from a background system to an active component of business operations. When a hospital’s clinical decision support, a retailer’s demand forecasting, or a manufacturer’s computer vision system depends on real-time network connectivity, a network outage is no longer an IT incident — it is a direct business interruption with measurable operational and financial consequences. The tolerance for “planned downtime” also narrows: AI systems that update continuously cannot be paused for maintenance windows the way batch-processing systems could. Reliability gaps that were acceptable under previous workload assumptions become unacceptable once AI takes a load-bearing role in the business.

End-to-end network accountability means that a single provider owns responsibility for performance and incident resolution across the entire network path, from the customer’s equipment to the destination, without passing accountability to a third-party carrier or sub-vendor during an outage. In layered delivery models, where a local provider hands off to a national backbone carrier, incidents frequently generate escalations between providers rather than coordinated resolution — and the enterprise IT team becomes the coordinator by default. End-to-end accountability eliminates that pattern. DQE owns and operates its regional fiber network directly, which means a single team is accountable for the circuit from installation through every incident, with no handoffs and no finger-pointing when something goes wrong.